Instagram intends to introduce nudity protection technology in messages

Instagram is working on a new tool that can protect users from nude photos sent in private messages, representatives of Meta, which owns Instagram, told The Verge.

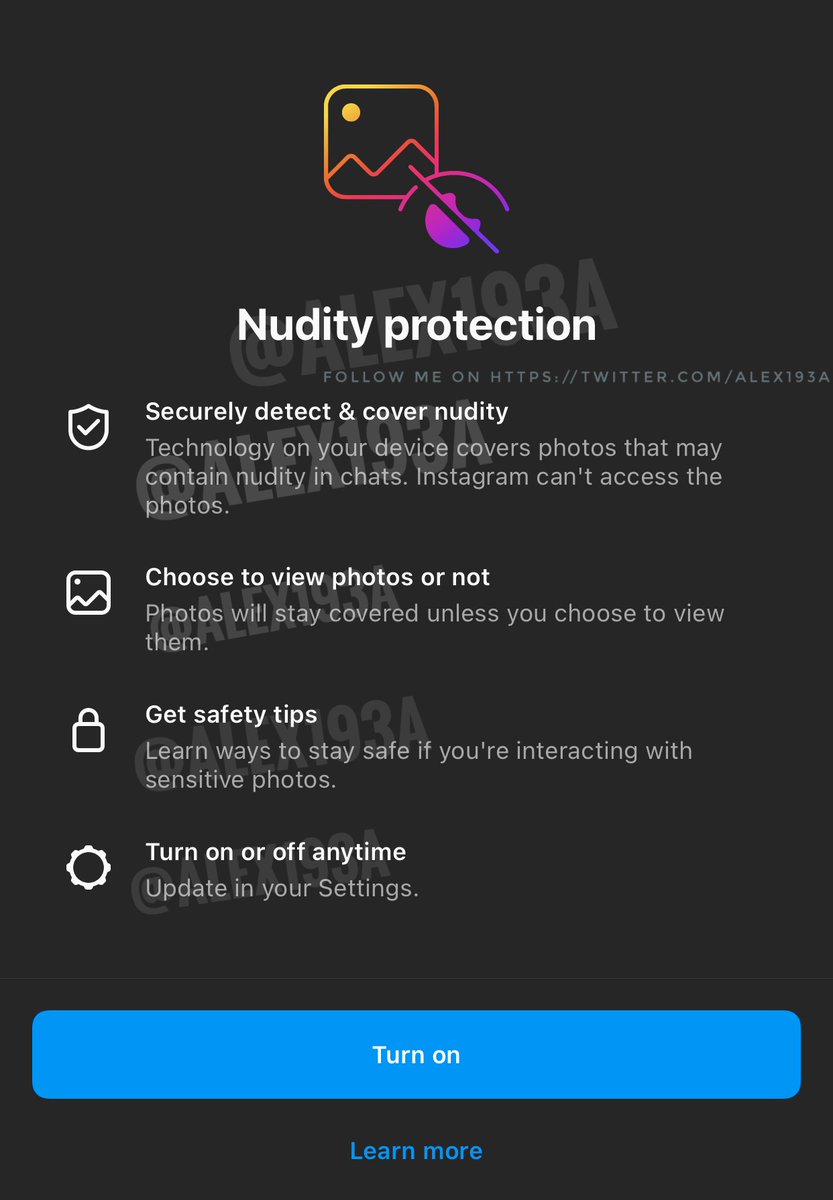

According to a tweet by insider Alessandro Paluzzi, the nudity protection technology covers up photos in chats that may contain nudity and lets users choose whether to view them.

According to Meta, the goal of implementing the new technology is to help shield people from unsolicited nude images or other unwanted content.

As additional protection, the company cannot view the images or share them with third parties. The new feature will be similar to the Hidden Words tool launched last year, which allows users to filter out offensive messages in DM requests based on keywords. If a request contains any of the filter words you have chosen, it is automatically placed in a hidden folder, which you never have to open.

Unsolicited nude photos are a widely discussed problem these days, so the new feature is likely to be welcomed by many users.

According to a 2020 study by University College London, 75.8% of 150 young people between the ages of 12 and 18 received unsolicited nude photos.

